whisper_model_load: loading model from 'models/ggml-base.en.bin'
whisper_model_load: mem_required = 505.00 MB
whisper_model_load: adding 1607 extra tokens
whisper_model_load: ggml ctx size = 163.43 MB
whisper_model_load: memory size = 22.83 MB
whisper_model_load: model size = 140.54 MB

main: processing 'samples/jfk.wav' (176000 samples, 11.0 sec), 4 threads, lang = en, task = transcribe, timestamps = 1.

And so my fellow Americans, ask not what your country can do for you, ask what you can do for your country.

whisper_print_timings: load time = 318.74 ms
whisper_print_timings: mel time = 123.62 ms
whisper_print_timings: sample time = 0.00 ms
whisper_print_timings: encode time = 6228.12 ms / 1038.02 ms per layer
whisper_print_timings: decode time = 758.88 ms / 126.48 ms per layer
whisper_print_timings: total time = 7442.09 ms

main -m models/ggml-base.en.bin -f samples/jfk.wav

whisper_print_timings: load time = 221.60 ms
whisper_print_timings: mel time = 85.55 ms
whisper_print_timings: encode time = 1707.26 ms / 284.54 ms per layer
whisper_print_timings: decode time = 183.90 ms / 30.65 ms per layer
whisper_print_timings: total time = 2211.89 ms
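To compare runs like the ones above, it can help to turn the timing lines into a real-time factor (processing time divided by audio length). The following is only a rough sketch: `parse_timings` and `real_time_factor` are hypothetical helper names, not part of whisper.cpp, and the 11.0 second duration is taken from the `processing 'samples/jfk.wav'` line.

```python
import re

# Matches entries such as "whisper_print_timings: total time = 2211.89 ms".
# Case-insensitive, because pasted logs sometimes get re-capitalised.
TIMING_RE = re.compile(
    r"whisper_print_timings:\s*(\w+) time\s*=\s*([\d.]+) ms", re.IGNORECASE
)


def parse_timings(log_text):
    """Return {stage: milliseconds} for every timing entry found in log_text."""
    return {stage.lower(): float(ms) for stage, ms in TIMING_RE.findall(log_text)}


def real_time_factor(total_ms, audio_seconds):
    """Processing time / audio duration; below 1.0 means faster than realtime."""
    return (total_ms / 1000.0) / audio_seconds


log = """
whisper_print_timings: load time = 318.74 ms
whisper_print_timings: mel time = 123.62 ms
whisper_print_timings: sample time = 0.00 ms
whisper_print_timings: encode time = 6228.12 ms / 1038.02 ms per layer
whisper_print_timings: decode time = 758.88 ms / 126.48 ms per layer
whisper_print_timings: total time = 7442.09 ms
"""

timings = parse_timings(log)
rtf = real_time_factor(timings["total"], audio_seconds=11.0)
encode_share = timings["encode"] / timings["total"]
print(f"encode share: {encode_share:.0%}, real-time factor: {rtf:.2f}")
```

For the 7442.09 ms run this gives a real-time factor of roughly 0.68, with the encoder accounting for most of the time. If the 2211.89 ms run used the same 11-second sample, it would come out at about 0.20.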

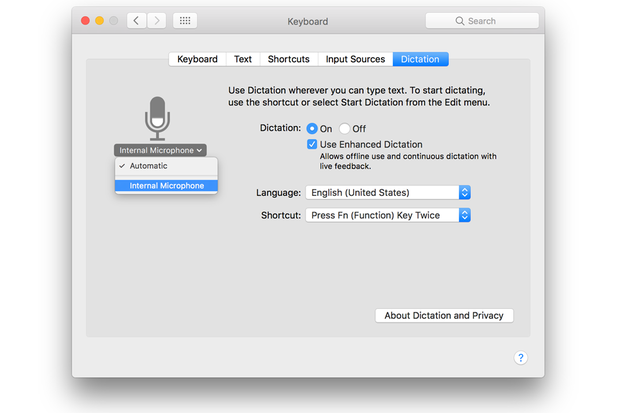

It also has a nice streaming input and some interesting examples. I think I will give it a try on my ageing workstation (Intel(R) Xeon(R) CPU E3-1245) and on my new toy, a Rock 5B (Rock5/hardware/5b - Radxa Wiki; OkDo are going to start stocking it), as alternatives to the Pi are becoming very valid, and see which model works before it pushes past realtime. I doubt it will do the medium model, but there are tiny, base and small to choose from. I don’t know how much faster it is than the OpenAI source, but it is optimised for CPU; I am guessing that at best it will be the tiny model (which is not that great, as it is from the small model up that Whisper excels). To be honest, with stock availability my default Raspberry platform is looking ever more in doubt, but the above is CPU based.

High-performance inference of OpenAI’s Whisper automatic speech recognition (ASR) model:

- Plain C/C++ implementation without dependencies
- Apple silicon first-class citizen - optimized via Arm Neon and Accelerate framework
- AVX intrinsics support for x86 architectures
- Low memory usage (Flash Attention + Flash Forward)
- Supported platforms: Linux, Mac OS (Intel and Arm), Windows (MSVC and MinGW), WebAssembly, Raspberry Pi, Android

Hopefully Whisper CPU performance improves, or … it can run on a Coral TPU? I think many home automation setups already have or want a Coral for running Frigate; it would be nice to use it for ASR as well.

My current config is RPi 4b as satellites, the i7 as base (also running Home Assistant, Node-RED, MQTT, etc… ) I am really happy with the performance of the “Hey Mycroft” wake word, Mozilla DeepSpeech for ASR, Fsticuffs, and Mimic3 ljspeech TTS (and Node-RED for fulfilment).

DeepSpeech seems resilient to music + microwave running in a kitchen with lots of reverberation.

$ time whisper canceltimer.wav --model small.en --language en --fp16 False --task transcribe

small.en got it right, but took 17 seconds on my CPU. The tiny.en and base.en models transcribed “cancel the timer” as “pencil the timer”.

$ time whisper setkitchenlights20percent.wav --model tiny.en --language en --fp16 False

Yeah, I have been doing some tests, and the ASR is really good, but on my CPU-only machine even the tiny.en model runs at realtime at best: it takes around 3 s to transcribe a 3 s utterance, which IMO is too slow to be usable.

For reference, I’m running a base+satellite configuration, with ASR done on the base: my home server, an Intel(R) Core™ i7-6700T CPU 2.80GHz with 24 GB RAM.
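Note that `time whisper ...` also counts Python start-up and model loading, so it overstates the per-utterance cost for a long-running service. A minimal sketch of timing only the transcription step is below. It assumes the `openai-whisper` Python package is installed; `time_transcription` and `make_whisper_transcriber` are hypothetical helper names, and the 3.0 second duration in the usage comment is only an illustrative value for a short command.

```python
import time


def time_transcription(transcribe, audio_path, audio_seconds):
    """Call transcribe(audio_path) once; return (result, elapsed_s, real_time_factor)."""
    start = time.perf_counter()
    result = transcribe(audio_path)
    elapsed = time.perf_counter() - start
    return result, elapsed, elapsed / audio_seconds


def make_whisper_transcriber(model_name="tiny.en"):
    """Build a transcribe callable backed by openai-whisper (assumed installed)."""
    import whisper  # imported lazily so the timing helper works without it

    model = whisper.load_model(model_name)  # loaded once, outside the timed region
    # fp16=False mirrors the "--fp16 False" flag used for CPU-only runs.
    return lambda path: model.transcribe(path, language="en", fp16=False)["text"]


# Usage (needs the package, the model download and the audio file):
#   transcribe = make_whisper_transcriber("tiny.en")
#   text, elapsed, rtf = time_transcription(transcribe, "canceltimer.wav", 3.0)
#   print(f"{text!r} in {elapsed:.1f} s (real-time factor {rtf:.2f})")
```

A real-time factor near 1.0 would match the "3 s for a 3 s utterance" experience described above. Keeping the model loaded between utterances removes the load time from every request, but it does not speed up the transcription itself.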
